Introduction

Artificial Intelligence (AI) is rapidly becoming a cornerstone of modern engineering systems, powering innovations across transportation, healthcare, finance, and infrastructure. However, as AI systems become more embedded in critical operations, the need for robust security practices becomes increasingly urgent.

Unlike traditional software systems, AI introduces unique challenges because of its reliance on data, its probabilistic decision-making, and, in many systems, continuous retraining on new data. These characteristics expand the attack surface and require a holistic, lifecycle-based security approach.

This article explores key AI security risks and outlines best practices to ensure resilient, trustworthy, and secure AI deployments.

Understanding AI Security Risks

AI systems are vulnerable at multiple levels. Some of the most critical threats include:

1. Adversarial Attacks

These attacks apply small, carefully crafted perturbations to input data so that a model makes incorrect predictions. The changes are often imperceptible to humans, yet they can flip a model's output.

2. Data Poisoning

Attackers may inject malicious or misleading data into training datasets, compromising model integrity and influencing behaviour.

3. Model Extraction and Inversion

Model inversion attacks infer sensitive information about the training data from a model's outputs. Model extraction attacks reverse-engineer a functionally equivalent copy of a model through repeated queries.

4. API Exploitation

Public-facing AI services can be abused through excessive querying, probing, or denial-of-service attacks.
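To make the adversarial-attack threat concrete, here is a minimal sketch of the Fast Gradient Sign Method (FGSM), one well-known way to craft such perturbations. It assumes a toy logistic-regression model with hand-picked, hypothetical weights; real attacks target far larger models, but the mechanics are the same: nudge every feature by a small `epsilon` in the direction that increases the model's loss.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights: list[float], bias: float, x: list[float]) -> float:
    """Positive-class probability from a toy logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(value: float) -> int:
    return (value > 0) - (value < 0)


def fgsm_perturb(weights, bias, x, true_label, epsilon):
    """Fast Gradient Sign Method for logistic regression.

    For binary cross-entropy loss, the gradient with respect to the input is
    (p - y) * w, so each feature moves by epsilon in the sign of that gradient,
    i.e. in the direction that increases the loss.
    """
    p = predict(weights, bias, x)
    grad = [(p - true_label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model and input, chosen for illustration only.
weights, bias = [2.0, -3.0, 1.5], 0.0
x = [0.3, 0.1, 0.2]
x_adv = fgsm_perturb(weights, bias, x, true_label=1, epsilon=0.1)
print(round(predict(weights, bias, x), 3), round(predict(weights, bias, x_adv), 3))
```

No feature moves by more than 0.1, yet the predicted probability drops from above 0.5 to below 0.5, flipping the class.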

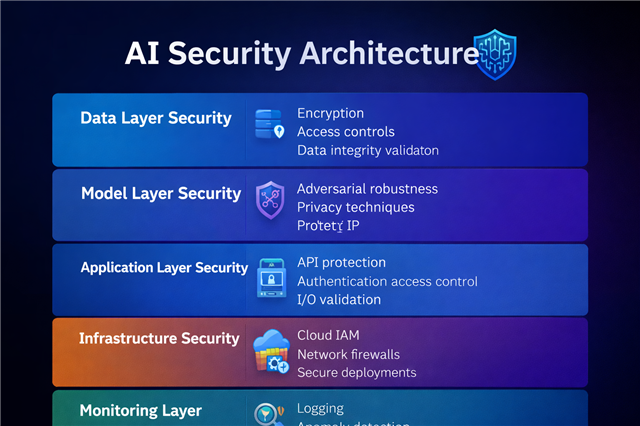

Securing the AI Lifecycle

Effective AI security must address every stage of the system lifecycle:

Data Collection and Preparation

- Validate data sources and ensure authenticity

- Apply encryption for data at rest and in transit

- Detect anomalies and mitigate bias where possible
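One simple way to check that a data source is authentic is to compare each file's SHA-256 digest against a trusted manifest before ingestion. The sketch below assumes such a manifest exists and is itself delivered over a separately trusted channel (for example, signed); the file name and contents are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_source(name: str, data: bytes, trusted_manifest: dict[str, str]) -> bool:
    """Accept data only if its SHA-256 digest matches the trusted manifest entry."""
    expected = trusted_manifest.get(name)
    if expected is None:
        return False  # unknown source: reject by default
    # compare_digest performs a constant-time comparison of the two hex strings
    return hmac.compare_digest(sha256_hex(data), expected)


# Hypothetical manifest and file contents.
original = b"station,count\nA,120\nB,95\n"
manifest = {"counts.csv": sha256_hex(original)}
print(verify_source("counts.csv", original, manifest))  # True
print(verify_source("counts.csv", original.replace(b"95", b"950"), manifest))  # False
```

A digest check only proves the data has not changed since the manifest was produced; it says nothing about whether the original data was correct, which is why anomaly detection is still needed.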

Model Development and Training

- Use secure and isolated training environments

- Maintain strict version control and dataset lineage

- Implement adversarial training techniques
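Dataset lineage can be made tamper-evident by recording, for every model version, a hash of the exact training data and configuration. The following is a minimal sketch, assuming the dataset fits in memory as bytes; the field names and the model version string are hypothetical.

```python
import hashlib
import json


def lineage_record(model_version: str, dataset: bytes, config: dict) -> dict:
    """Build a reproducible record tying a model version to its exact data and config."""
    record = {
        "model_version": model_version,
        "dataset_sha256": hashlib.sha256(dataset).hexdigest(),
        "config": config,
    }
    # Canonical JSON (sorted keys, fixed separators) so identical inputs give an identical ID.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["record_id"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record


rec = lineage_record("demand-model-1.4.0", b"...training data bytes...", {"lr": 0.01, "epochs": 10})
print(json.dumps(rec, indent=2))
```

Storing these records alongside the model artefacts in version control lets a reviewer confirm that a deployed model was trained on the data it claims.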

Model Evaluation

- Test models against adversarial inputs

- Evaluate robustness, fairness, and reliability

- Conduct security-focused validation testing
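A basic robustness check compares clean accuracy with the worst accuracy observed under bounded input noise. This sketch uses a hypothetical threshold classifier and random uniform noise; random noise is a weak stand-in for gradient-based adversarial inputs, which tend to find failures that random sampling misses, so treat it as a first smoke test rather than a security evaluation.

```python
import random


def accuracy(model, inputs, labels) -> float:
    correct = sum(1 for x, y in zip(inputs, labels) if model(x) == y)
    return correct / len(labels)


def worst_case_noisy_accuracy(model, inputs, labels, epsilon, trials=20, seed=0):
    """Lowest accuracy observed over several rounds of uniform noise in [-epsilon, epsilon]."""
    rng = random.Random(seed)  # fixed seed keeps the evaluation reproducible
    worst = 1.0
    for _ in range(trials):
        perturbed = [[xi + rng.uniform(-epsilon, epsilon) for xi in x] for x in inputs]
        worst = min(worst, accuracy(model, perturbed, labels))
    return worst


def threshold_model(x):
    # Hypothetical toy classifier: positive when the feature sum exceeds 1.0.
    return int(sum(x) > 1.0)


inputs = [[0.2, 0.3], [0.9, 0.8], [0.5, 0.45], [1.0, 0.6]]
labels = [0, 1, 0, 1]
print(accuracy(threshold_model, inputs, labels))
print(worst_case_noisy_accuracy(threshold_model, inputs, labels, epsilon=0.2))
```

A large gap between the two numbers signals that predictions near the decision boundary are fragile.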

Deployment

- Secure APIs with authentication and rate limiting

- Apply access controls using role-based permissions

- Protect endpoints from unauthorized access
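Authentication and rate limiting can be combined in a single request gate. The sketch below uses a token-bucket limiter per client; the API key table, client IDs, status codes, and bucket parameters are all hypothetical, and a production service would typically rely on its API gateway for this.

```python
import hmac
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `refill_per_sec` tokens per second."""

    def __init__(self, capacity: int, refill_per_sec: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Hypothetical key store; in practice store hashed keys, not plaintext.
API_KEYS = {"client-a": "s3cr3t-key-a"}
buckets: dict[str, TokenBucket] = {}


def handle_request(client_id: str, api_key: str, clock=time.monotonic) -> int:
    """Return an HTTP-style status: 401 unauthenticated, 429 rate-limited, 200 allowed."""
    expected = API_KEYS.get(client_id)
    if expected is None or not hmac.compare_digest(api_key, expected):
        return 401
    bucket = buckets.setdefault(client_id, TokenBucket(capacity=5, refill_per_sec=1.0, clock=clock))
    return 200 if bucket.allow() else 429
```

Authentication is checked before the limiter, so unauthenticated callers never consume a client's quota.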

Monitoring and Maintenance

- Continuously monitor system performance and anomalies

- Detect model drift and unexpected behaviour

- Regularly update and patch vulnerabilities
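Model drift is often detected by comparing the distribution of a feature in production with its distribution at training time. This sketch computes the Population Stability Index (PSI), one common drift statistic; the bin cut points and sample data are hypothetical. A widely quoted rule of thumb treats PSI above roughly 0.25 as a significant shift, but thresholds should be tuned per feature.

```python
import bisect
import math


def _bin_proportions(values, cut_points, floor=1e-6):
    """Share of values in each bin defined by sorted cut points (outer bins are open-ended)."""
    counts = [0] * (len(cut_points) + 1)
    for v in values:
        counts[bisect.bisect_right(cut_points, v)] += 1
    # Floor empty bins so the log term below is always defined.
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(baseline, live, cut_points):
    base_p = _bin_proportions(baseline, cut_points)
    live_p = _bin_proportions(live, cut_points)
    return sum((lp - bp) * math.log(lp / bp) for bp, lp in zip(base_p, live_p))


baseline = [0.1 * i for i in range(100)]  # hypothetical training-time feature values
shifted = [v + 5.0 for v in baseline]  # hypothetical live values after drift
cuts = [2.5, 5.0, 7.5]
print(population_stability_index(baseline, baseline, cuts))  # 0.0
print(population_stability_index(baseline, shifted, cuts))
```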

AI Security Best Practices

To build secure AI systems, engineers and organisations should adopt the following strategies:

Secure Data Pipelines

Data is the foundation of AI systems. Protecting it is critical.

- Use strong encryption protocols

- Implement strict access controls

- Validate and sanitise incoming data
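Validation can start with a strict schema that rejects records with missing fields, unexpected fields, wrong types, or out-of-range values. The schema below is hypothetical, and this sketch rejects bad records rather than silently repairing them, which is usually the safer default for a training pipeline.

```python
from typing import Any

# Hypothetical schema: field name -> (expected type, minimum, maximum).
SCHEMA: dict[str, tuple[type, Any, Any]] = {
    "station_id": (str, None, None),
    "passenger_count": (int, 0, 5000),
    "temperature_c": (float, -40.0, 60.0),
}


def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the record is accepted."""
    errors = []
    unexpected = set(record) - set(SCHEMA)
    if unexpected:
        errors.append(f"unexpected fields: {sorted(unexpected)}")
    for field, (expected_type, low, high) in SCHEMA.items():
        if field not in record:
            errors.append(f"missing field: {field}")
            continue
        value = record[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            errors.append(f"{field}: expected {expected_type.__name__}")
            continue
        if (low is not None and value < low) or (high is not None and value > high):
            errors.append(f"{field}: {value!r} outside [{low}, {high}]")
    return errors


print(validate_record({"station_id": "A", "passenger_count": -3, "temperature_c": 18.5}))
```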

Robust Model Training

- Incorporate adversarial examples during training

- Use privacy-preserving techniques such as differential privacy

- Ensure reproducibility and traceability
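The core idea of differential privacy is to add calibrated noise so that any single record has a bounded effect on the output. Training models privately usually relies on DP-SGD; as a simpler stand-in, this sketch applies the Laplace mechanism to a counting query. The records are hypothetical, and Python's `random` module is not a cryptographically secure source: naive floating-point sampling like this is known to leak information, so production systems should use a vetted differential-privacy library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponential draws with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (epsilon-differentially private)."""
    true_count = sum(1 for r in records if predicate(r))
    sensitivity = 1.0  # adding or removing one record changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)
ages = [23, 35, 41, 29, 52, 67, 38]  # hypothetical sensitive records
print(private_count(ages, lambda a: a > 40, epsilon=1.0, rng=rng))
```

A smaller `epsilon` means more noise and stronger privacy; the trade-off against accuracy has to be chosen per use case.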

Access Control and Zero Trust

- Apply Zero Trust principles across systems

- Enforce role-based access control (RBAC)

- Secure APIs with authentication and monitoring
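Zero Trust and RBAC combine naturally into a deny-by-default check that runs on every request: no permission is granted unless the caller is authenticated and one of their roles explicitly holds it. The roles and permission names below are hypothetical.

```python
# Hypothetical role-to-permission mapping; real systems load this from a policy store.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "data_scientist": {"model:predict", "model:evaluate"},
    "ml_engineer": {"model:predict", "model:evaluate", "model:deploy"},
    "auditor": {"audit_log:read"},
}


def is_allowed(authenticated: bool, roles: list[str], permission: str) -> bool:
    """Deny by default: every request must be authenticated and hold an explicit grant."""
    if not authenticated:
        return False
    # Unknown roles map to an empty permission set rather than raising.
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


print(is_allowed(True, ["data_scientist"], "model:deploy"))  # False
print(is_allowed(True, ["ml_engineer"], "model:deploy"))  # True
```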

Model Protection Techniques

- Use model watermarking to detect theft and prove ownership

- Deploy models in secure enclaves where possible

- Limit exposure of model internals
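Limiting what an API returns is one practical way to reduce exposure of model internals: full probability vectors make extraction and inversion attacks easier. This sketch returns only the top label and a coarsened confidence; the class names and scores are hypothetical. It raises the cost of such attacks but does not eliminate them, since label-only attacks also exist.

```python
def harden_output(probabilities: dict[str, float], decimals: int = 1) -> dict:
    """Expose only the top label and a rounded confidence instead of the full score vector."""
    label = max(probabilities, key=probabilities.get)
    return {"label": label, "confidence": round(probabilities[label], decimals)}


raw = {"normal": 0.8734, "incident": 0.1021, "maintenance": 0.0245}  # hypothetical scores
print(harden_output(raw))  # {'label': 'normal', 'confidence': 0.9}
```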

Continuous Monitoring

- Implement logging and audit trails

- Detect unusual usage patterns

- Use anomaly detection systems
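Audit trails are more useful when tampering is detectable. A minimal sketch, assuming an in-memory log: each entry stores the hash of the previous entry, so editing any past event breaks the chain. An attacker able to rewrite the whole log could recompute every hash, so in practice the latest hash would also be anchored somewhere the attacker cannot reach; the event fields here are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of events."""

    def __init__(self):
        self.entries: list[dict] = []

    def append(self, event: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        event = dict(event)  # copy so later caller mutations cannot alter the log
        self.entries.append({"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)})

    def verify(self) -> bool:
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"user": "alice", "action": "model:deploy"})
log.append({"user": "bob", "action": "model:predict"})
print(log.verify())  # True
```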

Governance and Compliance

Align AI systems with established standards, frameworks, and regulations:

- ISO/IEC 27001

- NIST AI Risk Management Framework

- GDPR for data protection

AI Security in Practice: Transport Systems

In intelligent transport systems—such as railway networks—AI is used for:

- Passenger flow prediction

- Scheduling optimisation

- Incident detection

Potential Risks:

- Manipulated data leading to incorrect predictions

- API abuse causing system disruption

- Exposure of sensitive operational data

Mitigation Strategies:

- Secure data ingestion pipelines

- Real-time anomaly detection

- Controlled access to operational dashboards
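Real-time anomaly detection on an operational feed, such as passenger counts per interval, can start with a rolling z-score: flag any value that sits far from the recent mean. The window size, threshold of 3 standard deviations, and sample stream are assumptions for illustration, not recommended settings.

```python
import statistics
from collections import deque


class RollingZScoreDetector:
    """Flags values more than `threshold` standard deviations from the rolling mean."""

    def __init__(self, window: int = 30, threshold: float = 3.0):
        self.values: deque[float] = deque(maxlen=window)
        self.threshold = threshold

    def update(self, value: float) -> bool:
        is_anomaly = False
        if len(self.values) >= 2:  # stdev needs at least two points
            mean = statistics.fmean(self.values)
            stdev = statistics.stdev(self.values)
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                is_anomaly = True
        self.values.append(value)
        return is_anomaly


detector = RollingZScoreDetector()
stream = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 250]  # hypothetical counts
print([detector.update(v) for v in stream])
```

Because every value enters the window, a sustained shift becomes the new baseline after a while; that is useful for genuine changes in demand but means slow, deliberate manipulation can also go unflagged.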

Emerging Trends in AI Security

The field of AI security continues to evolve with new innovations:

- Confidential AI – Secure computation environments protecting data during processing

- Federated Learning Security – Protecting decentralised training, where raw data stays on local devices and only model updates are shared

- Explainable AI (XAI) – Enhancing transparency and trust

- AI-driven Cybersecurity – Using AI to defend against cyber threats

Key Takeaways

- AI security must be integrated across the entire lifecycle

- Data integrity is among the most critical security factors, because every model inherits the quality and trustworthiness of its data

- Continuous monitoring is essential for resilience

- Security practices must evolve alongside AI advancements

Conclusion

As AI systems become increasingly critical to engineering and operational environments, ensuring their security is no longer optional—it is essential.

A secure AI system is not achieved through a single control but through a layered, proactive, and adaptive approach. By implementing best practices across data, models, infrastructure, and governance, organisations can build AI systems that are not only intelligent but also trustworthy and resilient.